AI planning relies on heuristics to guide the search toward well-performing solutions. Across the many problem domains that AI planning can tackle, a user can choose from a variety of heuristics, some of which are better suited to certain problem instances and domains than others. It has therefore become common in AI planning to employ portfolio approaches that try to select the best-suited heuristic for the problem at hand. However, such approaches do not take into account that the best-suited heuristic may change during the search.

Dynamic algorithm configuration (DAC) can be used to learn when to switch between heuristics based on internal statistics of the planning system. For example, tracking simple statistics that describe how the heuristics themselves perform allows DAC to learn dynamic policies that switch between heuristics during the search. We showed both theoretically and empirically that such flexible dynamic policies can outperform the theoretically best possible algorithm selector.
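To make the idea of switching heuristics during the search concrete, here is a minimal Python sketch. It is not the implementation from the paper: it assumes a greedy best-first search that keeps one open list per heuristic and asks a policy, before each expansion, which list to expand from. The function names, the statistics passed to the policy (`size`, `min_h`), and the toy grid problem are all hypothetical. The two handcrafted policies assume that ALT cycles through the heuristics in turn and RND picks one uniformly at random; a learned DAC policy would take their place and base its choice on the statistics.

```python
import heapq
import random
from itertools import count


def multi_heuristic_gbfs(start, is_goal, successors, heuristics, policy,
                         max_steps=10_000):
    """Greedy best-first search with one open list per heuristic.

    Before each expansion, `policy(step, stats)` returns the index of the
    open list (i.e. the heuristic) to expand from. Returns a tuple
    (goal_state, expansions), or None if no goal was found.
    """
    tie = count()  # tie-breaker so heap entries never compare states
    open_lists = [[] for _ in heuristics]
    closed = set()
    expansions = 0

    def push(state):
        # Every generated state is queued under every heuristic.
        for open_list, h in zip(open_lists, heuristics):
            heapq.heappush(open_list, (h(state), next(tie), state))

    push(start)
    for step in range(max_steps):
        if not any(open_lists):
            return None
        # Hypothetical per-heuristic statistics exposed to the policy.
        stats = [{"size": len(ol), "min_h": ol[0][0] if ol else None}
                 for ol in open_lists]
        choice = policy(step, stats)
        if not open_lists[choice]:
            # Fall back to any non-empty open list.
            choice = next(i for i, ol in enumerate(open_lists) if ol)
        _, _, state = heapq.heappop(open_lists[choice])
        if state in closed:
            continue  # stale entry: already expanded via another list
        closed.add(state)
        expansions += 1
        if is_goal(state):
            return state, expansions
        for nxt in successors(state):
            if nxt not in closed:
                push(nxt)
    return None


def alternating_policy(step, stats):
    """Assumed ALT behaviour: cycle through the heuristics in turn."""
    return step % len(stats)


def make_random_policy(seed=0):
    """Assumed RND behaviour: pick a heuristic uniformly at random."""
    rng = random.Random(seed)
    return lambda step, stats: rng.randrange(len(stats))


# Toy problem (invented for illustration): reach GOAL on an 8x8 grid.
GOAL, SIZE = (5, 5), 8


def successors(state):
    x, y = state
    moves = [(x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)]
    return [(a, b) for a, b in moves if 0 <= a < SIZE and 0 <= b < SIZE]


# Each heuristic only "sees" one coordinate, so neither is informative alone.
heuristics = [lambda s: abs(GOAL[0] - s[0]), lambda s: abs(GOAL[1] - s[1])]

result_alt = multi_heuristic_gbfs((0, 0), lambda s: s == GOAL, successors,
                                  heuristics, alternating_policy)
result_rnd = multi_heuristic_gbfs((0, 0), lambda s: s == GOAL, successors,
                                  heuristics, make_random_policy(seed=1))
```

The policy is an ordinary function of the step counter and the per-heuristic statistics, so a static choice (always return the same index), a handcrafted schedule, and a learned policy can all be plugged into the same search loop.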

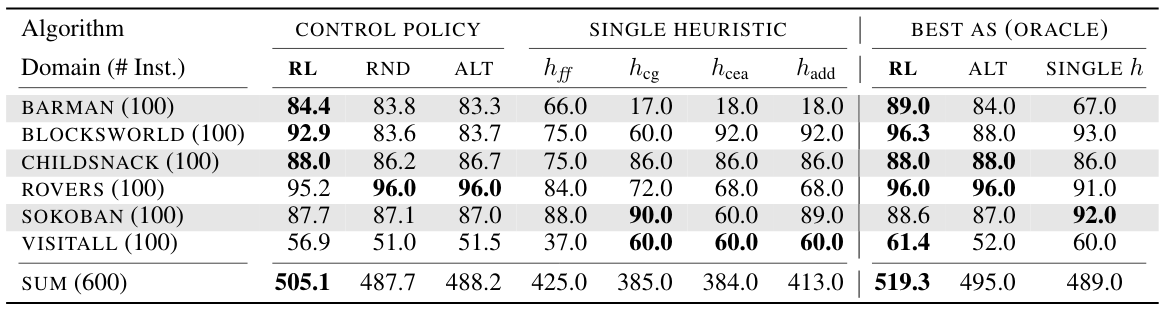

Number of solved problem instances. RL corresponds to a learned DAC policy. RND and ALT are two handcrafted dynamic policies. On the problem domains Barman and Childsnack the DAC policies (first column) outperform the theoretically best possible algorithm selector (last column).
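The comparison in the caption can be read as follows: a perfect algorithm selector picks, for each instance, the single best heuristic, so it solves exactly those instances that at least one individual heuristic solves. A dynamic policy can exceed that number by solving instances that no single heuristic solves on its own. The sketch below illustrates this counting with invented toy data; the configuration names and instance ids are hypothetical and are not results from the paper.

```python
def coverage(solved_by):
    """Map each configuration name to its number of solved instances."""
    return {name: len(instances) for name, instances in solved_by.items()}


def oracle_selector_coverage(solved_by, static_heuristics):
    """Coverage of a perfect per-instance selector over single heuristics.

    An instance counts as solved if at least one static heuristic solves it.
    """
    return len(set().union(*(solved_by[h] for h in static_heuristics)))


# Invented toy data: sets of solved instance ids per configuration.
solved_by = {
    "h_a": {1, 2},             # hypothetical static heuristic
    "h_b": {2, 3},             # hypothetical static heuristic
    "dynamic": {1, 2, 3, 4},   # hypothetical dynamic policy
}

per_config = coverage(solved_by)
oracle = oracle_selector_coverage(solved_by, ["h_a", "h_b"])
dynamic_beats_oracle = per_config["dynamic"] > oracle
```

In this toy setting the dynamic policy solves an instance that neither static heuristic solves, which is the only way a configuration can exceed the oracle selector's coverage.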

References

- D. Speck, A. Biedenkapp, F. Hutter, R. Mattmüller, and M. Lindauer. Learning Heuristic Selection with Dynamic Algorithm Configuration. In: Proceedings of the Thirty-First International Conference on Automated Planning and Scheduling (ICAPS’21).

Link to source code and data of using DAC to switch heuristics in AI planning.

Link to the video presentation for a workshop version of the paper presented at PRL@ICAPS’20.